分享# -*- coding:utf-8 -*-

import requests

from bs4 import BeautifulSoup

import os

import sys

reload(sys)

sys.setdefaultencoding('utf-8')

#网页下载到本地后的方式(把网址保存为Honolulu.htm)

import codecs

from lxml import etree

f=codecs.open("Honolulu.htm","r","utf-8")

content=f.read()

f.close()

tree=etree.HTML(content)

tourist_attractions = tree.xpath(u'//*[@*="Tourist attractions"]//li')

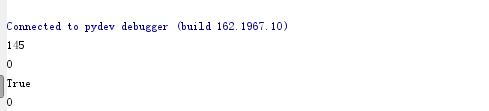

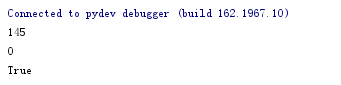

print len(tourist_attractions)
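On the locally saved file, the XPath `//*[@*="Tourist attractions"]//li` reads as: any element (`*`) that has at least one attribute of any name (`@*`) whose value is exactly `Tourist attractions`, followed by every `<li>` descendant of that element (`//li`). Python's `xml.etree.ElementTree` implements only a small XPath subset without the `@*` wildcard, so the minimal sketch below spells out the same rule by hand on a made-up, well-formed snippet. The `SAMPLE` markup and the helper `select_li_under_attr` are hypothetical, not taken from the city-data page.

```python
import xml.etree.ElementTree as ET

# Hypothetical snippet standing in for the real page; only the structure matters.
SAMPLE = """
<div>
  <section title="Tourist attractions">
    <ul><li>Beach</li><li>Museum</li></ul>
  </section>
  <section title="Other">
    <ul><li>Not selected</li></ul>
  </section>
</div>
"""

def select_li_under_attr(root, value):
    """Mimic //*[@*=value]//li: any element with ANY attribute equal to value,
    then every <li> descendant of it (duplicates removed, document order kept)."""
    seen = set()
    result = []
    for elem in root.iter():
        if value in elem.attrib.values():
            # elem.iter("li") also yields elem itself if it is an <li>; //li means
            # descendants only, so skip it
            for li in elem.iter("li"):
                if li is not elem and id(li) not in seen:
                    seen.add(id(li))
                    result.append(li)
    return result

root = ET.fromstring(SAMPLE.strip())
items = select_li_under_attr(root, "Tourist attractions")
print([li.text for li in items])  # ['Beach', 'Museum']
```

The `<li>` under the section whose attribute is `Other` is not selected, because the attribute value has to match the whole string.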

```python
# Approach 2: the page is not stored locally (fetched over HTTP)
myheaders = {'User-Agent': 'Mozilla/5.0 (Windows NT 6.1; WOW64; rv:50.0) Gecko/20100101 Firefox/50.0'}
foreign_born_link = "http://www.city-data.com/city/Honolulu-Hawaii.html"
res = requests.get(foreign_born_link, headers=myheaders)
res.encoding = 'utf-8'
soup = BeautifulSoup(res.text, 'html5lib')
# note: soup.text holds only the text nodes, without any tags
treex = etree.HTML(soup.text)
tourist_attractions = treex.xpath(u'//*[@*="Tourist attractions"]//li')
print len(tourist_attractions)
# both are unicode strings, so this is True; it does not compare the markup they contain
print type(soup.text) == type(content)
```
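A likely reason the second count differs from the first: BeautifulSoup's `soup.text` returns only the concatenated text of the page with every tag removed, so `etree.HTML(soup.text)` builds a tree that has no `<li>` elements for the XPath to find. The `type(...)` comparison only confirms that both values are unicode strings, not that they hold the same markup. The sketch below reproduces the effect with the standard library's `html.parser` on a made-up fragment; `PAGE`, `TagCounter`, `TextOnly`, `count_tags` and `text_only` are hypothetical names. With lxml, parsing the markup itself (for example `res.content` or `res.text`) rather than `soup.text` should keep the tags.

```python
from html.parser import HTMLParser

# Made-up page fragment standing in for the downloaded HTML.
PAGE = '<div title="Tourist attractions"><ul><li>Beach</li><li>Museum</li></ul></div>'

class TagCounter(HTMLParser):
    """Counts start tags with one name, e.g. how many <li> elements exist."""
    def __init__(self, name):
        super().__init__()
        self.name = name
        self.count = 0

    def handle_starttag(self, tag, attrs):
        if tag == self.name:
            self.count += 1

class TextOnly(HTMLParser):
    """Collects only character data, roughly what soup.text returns."""
    def __init__(self):
        super().__init__()
        self.parts = []

    def handle_data(self, data):
        self.parts.append(data)

def count_tags(html, name):
    parser = TagCounter(name)
    parser.feed(html)
    parser.close()
    return parser.count

def text_only(html):
    parser = TextOnly()
    parser.feed(html)
    parser.close()
    return "".join(parser.parts)

plain = text_only(PAGE)
print(repr(plain))                # 'BeachMuseum'
print(count_tags(PAGE, "li"))     # 2: the raw markup still has its <li> tags
print(count_tags(plain, "li"))    # 0: nothing left for an XPath like //li to match
print(type(PAGE) == type(plain))  # True: same type, different content
```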

```python
myheaders = {'User-Agent': 'Mozilla/5.0 (Windows NT 6.1; WOW64; rv:50.0) Gecko/20100101 Firefox/50.0'}
foreign_born_link = "http://www.zengyuetian.com/?p=2182"
res = requests.get(foreign_born_link, headers=myheaders)
res.encoding = 'utf-8'
soup = BeautifulSoup(res.text, 'html5lib')
treex = etree.HTML(soup.text)
# tourist_attractions = treex.xpath(u'//*[@id="content"]/div[2]/div[2]/div[2]')
# The XPath above is the one I copied from Chrome, and surprisingly it finds nothing either
tourist_attractions = treex.xpath(u'//*[@class="entry_title"]')
print len(tourist_attractions)
```
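The Chrome-copied path `//*[@id="content"]/div[2]/div[2]/div[2]` depends on the exact position of each `div` in the DOM that Chrome built, which can differ from the HTML that `requests` receives, so attribute-based selectors tend to be sturdier. Even then, `@class="entry_title"` is an exact string comparison: it fails if the element carries more than one class or if the real class name is spelled differently. In lxml, the usual XPath 1.0 idiom for matching a single class is `//*[contains(concat(' ', normalize-space(@class), ' '), ' entry_title ')]`. The standard-library sketch below contrasts the two kinds of matching on hypothetical markup; the helper `has_class` is a hypothetical name.

```python
import xml.etree.ElementTree as ET

# Hypothetical markup: the title element carries two classes.
SAMPLE = '<div><h1 class="entry_title post_title">Hello</h1><p class="entry">x</p></div>'

root = ET.fromstring(SAMPLE)

# Like XPath @class="entry_title": the whole attribute value must equal the string.
exact = root.findall(".//*[@class='entry_title']")

def has_class(elem, name):
    """Token match, like contains(concat(' ', normalize-space(@class), ' '), ' name ')."""
    return name in elem.get("class", "").split()

tokens = [e for e in root.iter() if has_class(e, "entry_title")]

print(len(exact))      # 0
print(len(tokens))     # 1
print(tokens[0].text)  # Hello
```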