    int CVideoWnd::ConnectRealPlay(DEV_INFO *pDev, int nChannel, bool bOsd)
    {
        // Stop any live stream that is already attached to this window.
        if (m_iPlayhandle != -1)
        {
            //H264_DVR_DelRealDataCallBack(m_iPlayhandle, RealDataCallBack, (long)this);
            H264_DVR_DelRealDataCallBack_V2(m_iPlayhandle, RealDataCallBack_V2, (long)this);
            if (!H264_DVR_StopRealPlay(m_iPlayhandle))
            {
                TRACE("H264_DVR_StopRealPlay fail m_iPlayhandle = %d", m_iPlayhandle);
            }
        }

        if (m_nPlaydecHandle == -1)
        {
            // open decoder
            BYTE byFileHeadBuf;
            if (H264_PLAY_OpenStream(m_nIndex, &byFileHeadBuf, 1, SOURCE_BUF_MIN * 50))
            {
                // set the info-frame callback
                H264_PLAY_SetInfoFrameCallBack(m_nIndex, videoInfoFramCallback, (DWORD)this);

                // overlay OSD text
                if (bOsd)
                {
                    // Zero-initialize so fields that are not set below (e.g. isBold) are not garbage.
                    OSD_INFO_TXT osd = {};
                    osd.bkColor = RGB(0, 255, 0);
                    osd.color = RGB(255, 0, 0);
                    osd.pos_x = 10;
                    osd.pos_y = 10;
                    osd.isTransparent = 0;
                    strcpy(osd.text, "test osd info");
                    H264_PLAY_SetOsdTex(m_nIndex, &osd);

                    OSD_INFO_TXT osd2 = {};
                    osd2.bkColor = RGB(255, 0, 0);
                    osd2.color = RGB(0, 255, 0);
                    osd2.pos_x = 10;
                    osd2.pos_y = 40;
                    osd2.isTransparent = 0;
                    osd2.isBold = 1;
                    strcpy(osd2.text, "test222 osd info");
                    H264_PLAY_SetOsdTex(m_nIndex, &osd2);

                    // set the OSD overlay draw callback
                    H264_PLAY_RigisterDrawFun(m_nIndex, drawOSDCall, (DWORD)this);
                }

                H264_PLAY_SetStreamOpenMode(m_nIndex, STREAME_REALTIME);
                if (H264_PLAY_Play(m_nIndex, this->m_hWnd))
                {
                    H264_PLAY_SetDisplayCallBack(m_nIndex, pProc, (LONG)this);
                    m_nPlaydecHandle = m_nIndex;
                }
            }
        }

        // Zero-initialize so fields that are not set below are not garbage.
        H264_DVR_CLIENTINFO playstru = {};
        playstru.nChannel = nChannel;
        playstru.nStream = 0; // main stream
        playstru.nMode = 0;   // TCP mode
        m_iPlayhandle = H264_DVR_RealPlay(pDev->lLoginID, &playstru);
        if (m_iPlayhandle <= 0)
        {
            DWORD dwErr = H264_DVR_GetLastError();
            CString sTemp("");
            sTemp.Format("access %s channel%d fail, dwErr = %d", pDev->szDevName, nChannel, dwErr);
            MessageBox(sTemp);
        }
        else
        {
            // set callback to decode receiving data
            //H264_DVR_SetRealDataCallBack(m_iPlayhandle, RealDataCallBack, (long)this);
            H264_DVR_MakeKeyFrame(pDev->lLoginID, nChannel, 0);
            H264_DVR_SetRealDataCallBack_V2(m_iPlayhandle, RealDataCallBack_V2, (long)this);
        }

        m_lLogin = pDev->lLoginID;
        m_iChannel = nChannel;
        return m_iPlayhandle;
    }

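There is only one callback function, which suggests it is meant for processing data on the client side. Sigh, my hair is falling out in clumps.

Don't you have the SDK documentation?

I got the documentation from customer support, but I still can't find the buffer address. (sob)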

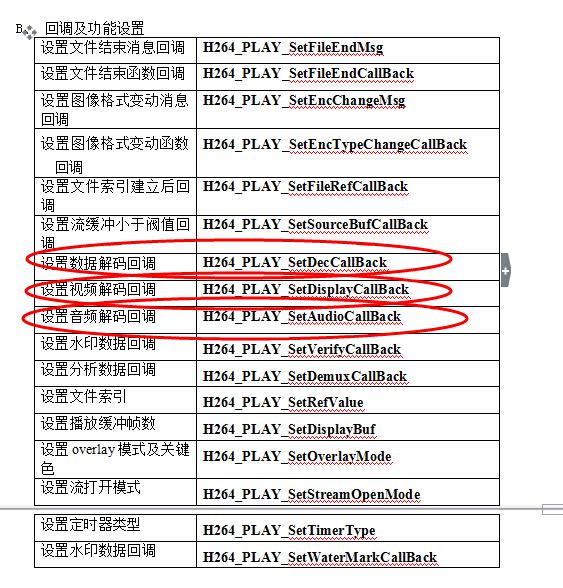

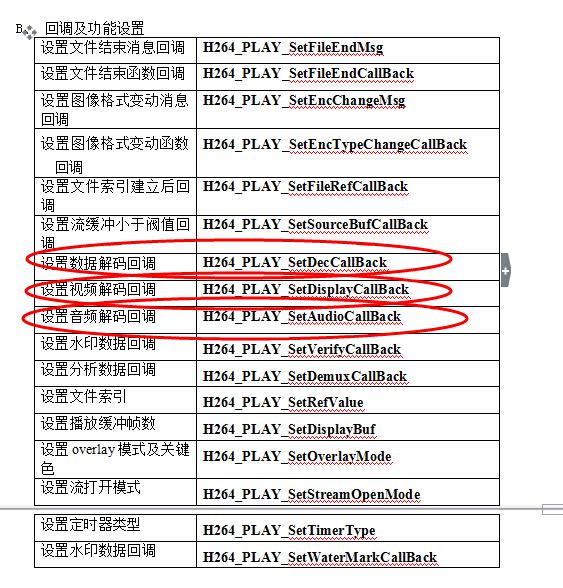

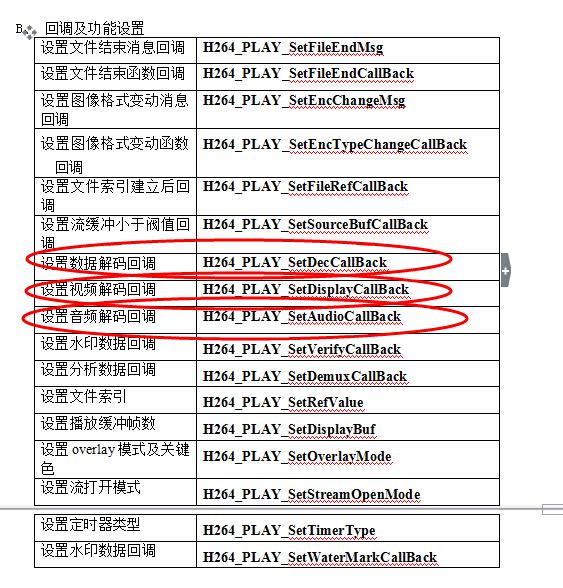

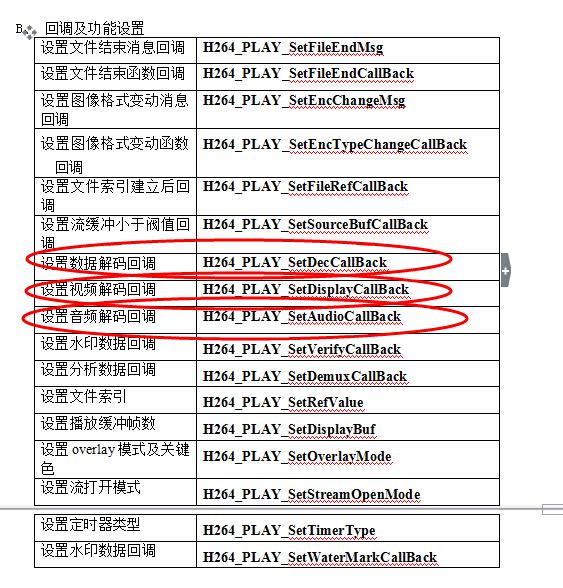

One function is documented like this:

The video data callback set with H264_PLAY_SetDisplayCallBack is called only when video data has been decoded, and the user handles the video data in it (for example, to take a snapshot). As long as decoded data keeps arriving, the callback keeps being called.

Its function prototype is:

    WORD H264_PLAY_SetDisplayCallback(
        LONG nPort,
        DisplayCallBack pDisplayProc,
        LONG nUser)

    H264_PLAY_SetDisplayCallBack(m_nIndex, pProc, (LONG)this);

    void __stdcall pProc(LONG nPort, LPCSTR pBuf, LONG nSize, LONG nWidth, LONG nHeight,
                         LONG nStamp, LONG nType, LONG nUser)
    {
    }
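Going by the parameter list of pProc, pBuf and nSize look like the address and length of the decoded frame, so the callback itself may already be the place where the buffer is exposed. The following is only a minimal sketch under that assumption. `LastFrame`, `g_lastFrame`, and `CopyDecodedFrame` are hypothetical names that are not part of the SDK, the two typedefs stand in for `<windows.h>`, and `__stdcall` is left out so the sketch compiles anywhere. It also assumes the buffer is only valid while the callback runs, which is why the bytes are copied.

```cpp
#include <vector>

// Stand-ins so the sketch compiles without <windows.h> (assumption).
typedef long LONG;
typedef const char* LPCSTR;

// Hypothetical container for the most recently decoded frame.
struct LastFrame {
    std::vector<char> data;  // private copy of the bytes behind pBuf
    LONG width;
    LONG height;
    LONG type;               // value passed in nType, stored unchanged
    LastFrame() : width(0), height(0), type(0) {}
};

// Hypothetical global, used here instead of casting nUser back to a pointer.
static LastFrame g_lastFrame;

// Copies nSize bytes starting at pBuf; returns false if there is nothing to copy.
bool CopyDecodedFrame(LPCSTR pBuf, LONG nSize, LONG nWidth, LONG nHeight,
                      LONG nType, LastFrame& out)
{
    if (pBuf == 0 || nSize <= 0) {
        return false;
    }
    out.data.assign(pBuf, pBuf + nSize);
    out.width = nWidth;
    out.height = nHeight;
    out.type = nType;
    return true;
}

// Same parameter list as pProc in the post. On Windows the real callback
// also needs __stdcall; it is omitted here for portability.
void pProc(LONG nPort, LPCSTR pBuf, LONG nSize, LONG nWidth, LONG nHeight,
           LONG nStamp, LONG nType, LONG nUser)
{
    (void)nPort; (void)nStamp; (void)nUser;  // unused in this sketch
    CopyDecodedFrame(pBuf, nSize, nWidth, nHeight, nType, g_lastFrame);
}
```

After a frame has been delivered, `g_lastFrame.data` holds a copy that can be saved or processed outside the callback. How to interpret nType (the pixel format) has to come from the SDK documentation.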

    typedef struct
    {
        int nPacketType;              // packet type, see MEDIA_PACK_TYPE
        char* pPacketBuffer;          // buffer address
        unsigned int dwPacketSize;    // packet size

        // absolute timestamp
        int nYear;                    // timestamp: year
        int nMonth;                   // timestamp: month
        int nDay;                     // timestamp: day
        int nHour;                    // timestamp: hour
        int nMinute;                  // timestamp: minute
        int nSecond;                  // timestamp: second
        unsigned int dwTimeStamp;     // relative timestamp, low part, in milliseconds
        unsigned int dwTimeStampHigh; // relative timestamp, high part, in milliseconds
        unsigned int dwFrameNum;      // frame number
        unsigned int dwFrameRate;     // frame rate
        unsigned short uWidth;        // image width
        unsigned short uHeight;       // image height
        unsigned int Reserved[6];     // reserved
    } PACKET_INFO_EX;
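For the undecoded stream, the buffer address asked about earlier appears to be pPacketBuffer, with dwPacketSize as its length. Below is a minimal sketch of reading those fields from whatever callback hands over a `PACKET_INFO_EX` pointer. The code earlier in the thread registers RealDataCallBack_V2, but its exact signature is not shown, so it is not reproduced here. `CopyPacketPayload` and `RelativeTimestampMs` are hypothetical helper names, and combining the two timestamp halves as high << 32 | low is an assumption based on the field comments.

```cpp
#include <vector>

// Layout copied from the struct posted above.
typedef struct
{
    int nPacketType;
    char* pPacketBuffer;
    unsigned int dwPacketSize;
    int nYear;
    int nMonth;
    int nDay;
    int nHour;
    int nMinute;
    int nSecond;
    unsigned int dwTimeStamp;
    unsigned int dwTimeStampHigh;
    unsigned int dwFrameNum;
    unsigned int dwFrameRate;
    unsigned short uWidth;
    unsigned short uHeight;
    unsigned int Reserved[6];
} PACKET_INFO_EX;

// Hypothetical helper: returns a private copy of the packet payload,
// or an empty vector if the packet carries no data.
std::vector<char> CopyPacketPayload(const PACKET_INFO_EX* pkt)
{
    if (pkt == 0 || pkt->pPacketBuffer == 0 || pkt->dwPacketSize == 0) {
        return std::vector<char>();
    }
    return std::vector<char>(pkt->pPacketBuffer,
                             pkt->pPacketBuffer + pkt->dwPacketSize);
}

// Hypothetical helper: joins the two 32-bit halves of the relative
// timestamp into one 64-bit millisecond value (assumed layout).
unsigned long long RelativeTimestampMs(const PACKET_INFO_EX* pkt)
{
    if (pkt == 0) {
        return 0;
    }
    return (static_cast<unsigned long long>(pkt->dwTimeStampHigh) << 32)
           | pkt->dwTimeStamp;
}
```

Copying the payload rather than keeping the pointer is a deliberate choice: nothing in the thread says how long pPacketBuffer stays valid after the callback returns.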