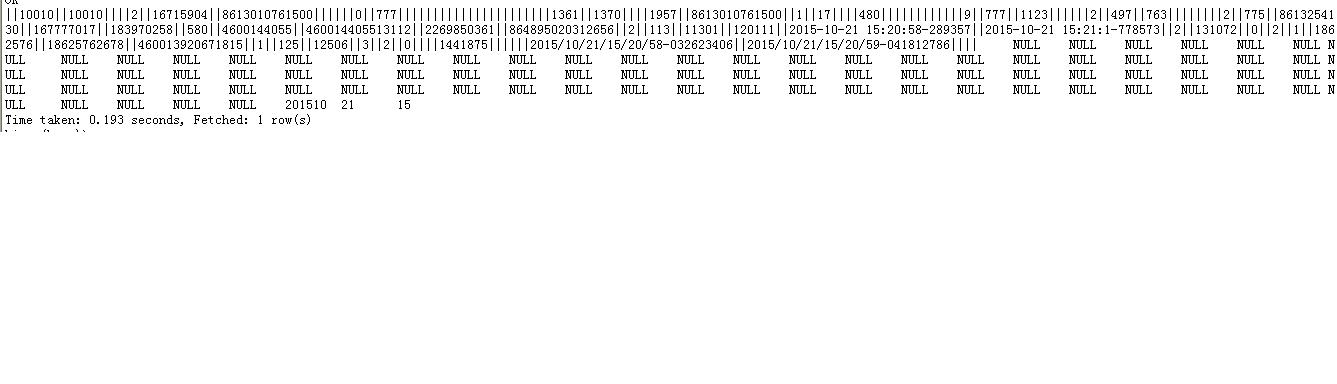

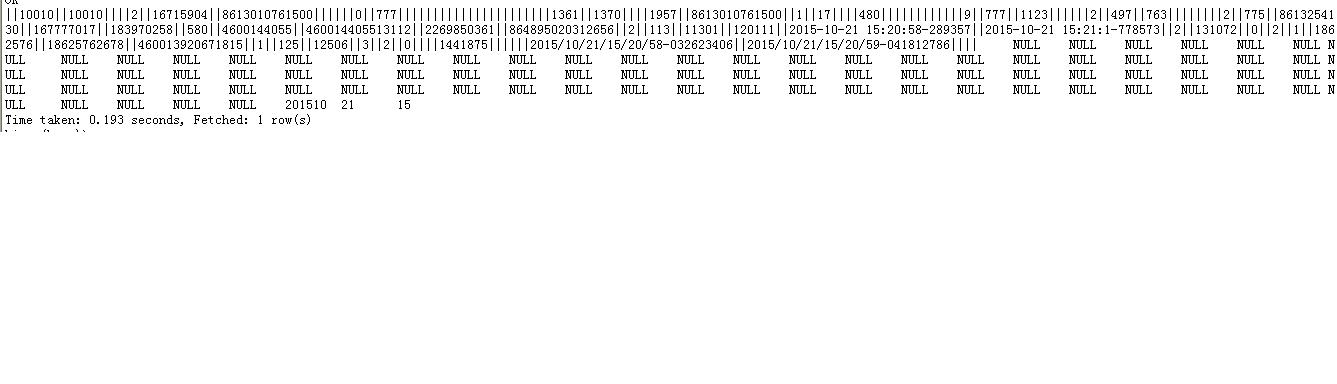

The columns in the text file are separated by `||`.

Here is the Java code (adapted from code I found online):

```java
package com.pack.conf;

import java.io.IOException;

import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapred.FileSplit;
import org.apache.hadoop.mapred.InputSplit;
import org.apache.hadoop.mapred.JobConf;
import org.apache.hadoop.mapred.LineRecordReader;
import org.apache.hadoop.mapred.RecordReader;
import org.apache.hadoop.mapred.Reporter;
import org.apache.hadoop.mapred.TextInputFormat;

public class MCInputFormat extends TextInputFormat {

    // Called once per input split to obtain the record reader for that split.
    public RecordReader<LongWritable, Text> getRecordReader(
            InputSplit genericSplit, JobConf job, Reporter reporter)
            throws IOException {
        reporter.setStatus(genericSplit.toString());
        return new LineRecordReader(job, (FileSplit) genericSplit);
    }

    // Wraps a LineRecordReader and rewrites each line before handing it on.
    public static class MCRecordReader implements RecordReader<LongWritable, Text> {

        LineRecordReader reader;
        Text text;

        public MCRecordReader(LineRecordReader reader) {
            this.reader = reader;
            text = reader.createValue();
        }

        @Override
        public void close() throws IOException {
            reader.close();
        }

        @Override
        public LongWritable createKey() {
            return reader.createKey();
        }

        @Override
        public Text createValue() {
            return new Text();
        }

        @Override
        public long getPos() throws IOException {
            return reader.getPos();
        }

        @Override
        public float getProgress() throws IOException {
            return reader.getProgress();
        }

        @Override
        public synchronized boolean next(LongWritable key, Text value) throws IOException {
            while (reader.next(key, text)) {
                // Replace every "||" with \001 (Ctrl-A), Hive's default field delimiter.
                String strReplace = text.toString().toLowerCase().replaceAll("\\|\\|", "\001");
                Text txtReplace = new Text();
                txtReplace.set(strReplace);
                value.set(txtReplace.getBytes(), 0, txtReplace.getLength());
                return true;
            }
            return false;
        }
    }
}
```
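In this wrapper pattern, Hive only sees the rewritten lines if `getRecordReader` actually hands back the wrapper. As posted, it returns a bare `LineRecordReader`, so `MCRecordReader.next()` is never called and the `||` delimiters reach Hive unchanged; the wrapped form would be `return new MCRecordReader(new LineRecordReader(job, (FileSplit) genericSplit));`. The sketch below models that delegation in plain Java so it runs without Hadoop. `LineSource`, `PlainLineSource`, `DelimiterReplacingSource` and `openReader` are hypothetical stand-ins for `RecordReader`, `LineRecordReader`, `MCRecordReader` and `getRecordReader`, not Hadoop APIs.

```java
import java.util.ArrayList;
import java.util.Iterator;
import java.util.List;

// Plain-Java model of the wrapping that MCInputFormat relies on.
// All type and method names here are hypothetical stand-ins for the Hadoop classes.
public class WrappingReaderSketch {

    interface LineSource {
        /** Returns the next line, or null at end of input. */
        String nextLine();
    }

    /** Stand-in for LineRecordReader: returns lines unchanged. */
    static class PlainLineSource implements LineSource {
        private final Iterator<String> it;

        PlainLineSource(List<String> lines) {
            this.it = lines.iterator();
        }

        public String nextLine() {
            return it.hasNext() ? it.next() : null;
        }
    }

    /** Stand-in for MCRecordReader: delegates, then rewrites "||" to Ctrl-A (U+0001). */
    static class DelimiterReplacingSource implements LineSource {
        private final LineSource inner;

        DelimiterReplacingSource(LineSource inner) {
            this.inner = inner;
        }

        public String nextLine() {
            String line = inner.nextLine();
            // String.replace matches "||" literally, so no regex escaping is needed.
            return line == null ? null : line.replace("||", "\u0001");
        }
    }

    /** Stand-in for getRecordReader: the wrapper, not the inner reader, must be returned. */
    static LineSource openReader(List<String> lines) {
        return new DelimiterReplacingSource(new PlainLineSource(lines));
    }

    /** Drains a source into a list, the way a caller loops over next(). */
    static List<String> readAll(LineSource src) {
        List<String> out = new ArrayList<>();
        for (String line = src.nextLine(); line != null; line = src.nextLine()) {
            out.add(line);
        }
        return out;
    }

    public static void main(String[] args) {
        List<String> input = List.of("1||alice||30", "2||bob||25");
        for (String row : readAll(openReader(input))) {
            // Show the invisible Ctrl-A as ^A; prints 1^Aalice^A30 then 2^Abob^A25
            System.out.println(row.replace("\u0001", "^A"));
        }
    }
}
```

The sketch uses `String.replace`, which matches `||` literally, instead of the regex-based `replaceAll`. It also leaves out the `toLowerCase()` call from the original, since that lowercases the field values themselves, not just the delimiter.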

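Following the tutorial, I put the jar into Hive's `lib` directory. Then, when creating the table in Hive, I specified the input and output formats like this: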

```sql
.......
stored as INPUTFORMAT 'com.pack.conf.MCInputFormat' OUTPUTFORMAT 'org.apache.hadoop.hive.ql.io.HiveIgnoreKeyTextOutputFormat' ;
```
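For context: if the elided part of the DDL has no `ROW FORMAT` clause, Hive uses its default text SerDe (`LazySimpleSerDe`), which splits each record on Ctrl-A (`\001`). A line that still contains `||` therefore typically lands whole in the first column, with the remaining columns NULL. The simplified model below is an assumption for illustration, not Hive's actual code; `DefaultDelimiterSketch` and `toColumns` are hypothetical names.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

// Simplified model (hypothetical, not Hive's implementation) of how a text SerDe
// using the default Ctrl-A (U+0001) field delimiter maps one line to table columns.
public class DefaultDelimiterSketch {

    static final String FIELD_DELIM = "\u0001"; // Ctrl-A, Hive's default field delimiter

    /** Splits a line into exactly numColumns values; missing trailing columns become null. */
    static List<String> toColumns(String line, int numColumns) {
        // Limit -1 keeps trailing empty fields instead of discarding them.
        List<String> fields = Arrays.asList(line.split(FIELD_DELIM, -1));
        List<String> cols = new ArrayList<>();
        for (int i = 0; i < numColumns; i++) {
            cols.add(i < fields.size() ? fields.get(i) : null);
        }
        return cols;
    }

    public static void main(String[] args) {
        // "||" not rewritten: no Ctrl-A present, so the whole line is column 0.
        System.out.println(toColumns("1||alice||30", 3)); // [1||alice||30, null, null]
        // "||" rewritten to Ctrl-A: three separate columns.
        System.out.println(toColumns("1\u0001alice\u000130", 3)); // [1, alice, 30]
    }
}
```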

However, when I query the table in Hive, the text is not split into columns. Could anyone tell me where the problem is?
