The error shown:

pyspark.sql.utils.AnalysisException: 'org.apache.hadoop.hive.ql.metadata.HiveException: java.lang.RuntimeException: MetaException(message:org.apache.hadoop.hive.serde2.SerDeException org.apache.hadoop.hive.serde2.lazy.LazySimpleSerDe: columns has 13 elements while columns.types has 7 elements!);'

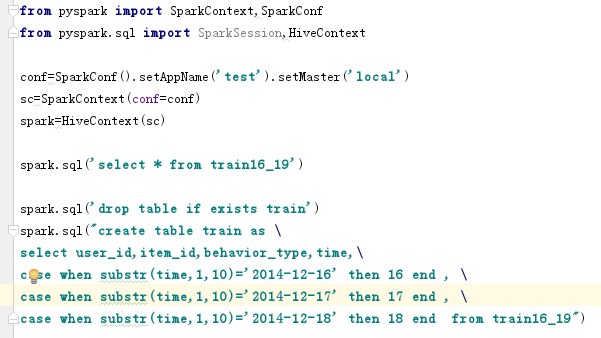

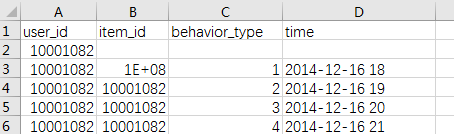

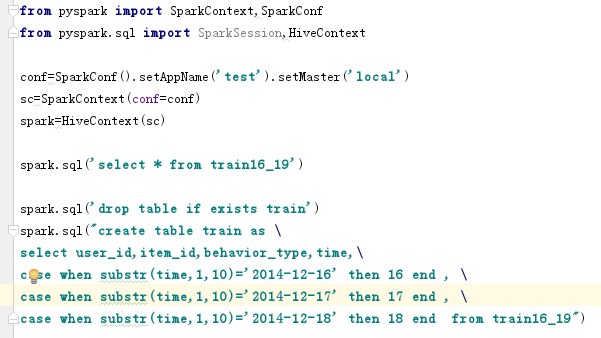

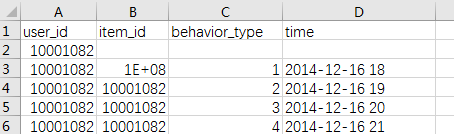

Image 1 is my program; image 2 is the table train16_19.

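I want to modify the time column so that it holds only the day of the month instead of the year, month, day, and hour. For example, 2014-12-16 18 should become 16.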
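To make the intended transformation concrete, here is a minimal sketch. It assumes the time column is stored as a string in the form `yyyy-MM-dd HH`, as in the example above. The names `extract_day` and `with_day_column` are hypothetical helpers for illustration and are not taken from the program in image 1.

```python
def extract_day(ts):
    """Return the day of the month from a string like '2014-12-16 18'."""
    date_part = ts.split(" ")[0]          # '2014-12-16'
    return int(date_part.split("-")[2])   # 16


def with_day_column(df, time_col="time"):
    """Replace time_col in a Spark DataFrame with the day of the month.

    Assumes time_col is a string column formatted as 'yyyy-MM-dd HH'.
    pyspark is imported lazily so extract_day can be used without Spark.
    """
    from pyspark.sql import functions as F

    # substring positions are 1-based: characters 9-10 hold the day
    day = F.substring(F.col(time_col), 9, 2).cast("int")
    return df.withColumn(time_col, day)


print(extract_day("2014-12-16 18"))  # 16
```

With the table loaded as a DataFrame, something like `with_day_column(spark.table("train16_19"))` would apply the same rule to every row, assuming a `SparkSession` named `spark`. Because the result is cast to an integer, a value such as `2014-12-06 18` becomes 6 rather than 06.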

Any pointers would be appreciated. Thanks!
