import random

import requests
import threading
try:
    import queue as Queue  # Python 3 renamed the module to "queue"
except ImportError:
    import Queue  # Python 2
import bs4
import time

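class SpiderMan(threading.Thread):
    """Worker thread that takes page numbers off a shared queue and scrapes them."""

    def __init__(self, queue):
        threading.Thread.__init__(self)
        self.queue = queue

    def run(self):
        while True:
            try:
                # get_nowait() avoids blocking forever when another thread
                # takes the last item between an empty() check and get().
                page = self.queue.get_nowait()
            except Queue.Empty:
                break
            spider(page)
            time.sleep(0.1)
        # Queue drained: print the time at which this worker finished.
        print(time.ctime())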

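def spider(page):
    """Fetch one listing page and download every image found on it."""
    print(page)
    url = "http://jandan.net/ooxx/page-%s#comments" % page
    print(url)
    myheader = {
        'Host': 'jandan.net',
        'User-Agent': 'Mozilla/5.0 (Windows NT 6.1; WOW64; rv:53.0) Gecko/20100101 Firefox/53.0',
        'Accept': 'text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8',
        'Accept-Language': 'zh-CN,zh;q=0.8,en-US;q=0.5,en;q=0.3',
        'Accept-Encoding': 'gzip, deflate',
    }
    rr = requests.get(url, headers=myheader)
    # Name the parser explicitly; otherwise bs4 warns and picks one itself.
    mydom = bs4.BeautifulSoup(rr.content, "html.parser")
    for img in mydom.find_all("img"):
        src = img.get('src')
        if not src:
            continue  # skip <img> tags that have no src attribute
        # The original code assumes protocol-relative URLs ("//host/path").
        if src.startswith("//"):
            src = "http:" + src
        # File name: one random letter plus the current Unix time in seconds.
        DownloadJpg(src, random.choice('abcdefghijklmn') + str(int(time.time())))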

def main():

queue=Queue.Queue()

for i in range(1,96):

queue.put(i)

threadlist=[]

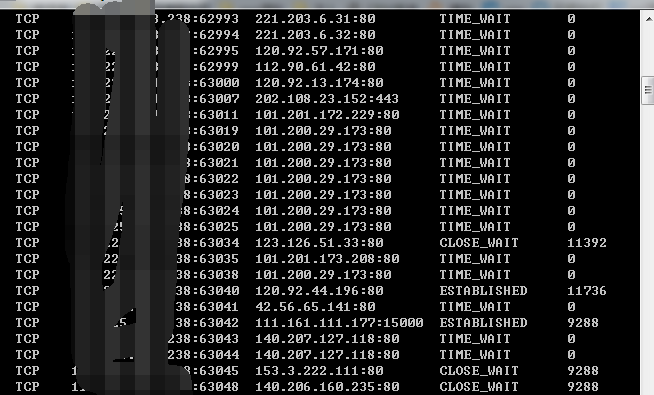

threadnum=50

for i in range(threadnum):

t=SpiderMan(queue)

threadlist.append(t)

t.start()

for i in threadlist:

i.join()

def DownloadJpg(url,name):

try:

f = requests.get(url)

print url

with open(("img1\%s.gif")%(name), "wb") as code:

code.write(f.content)

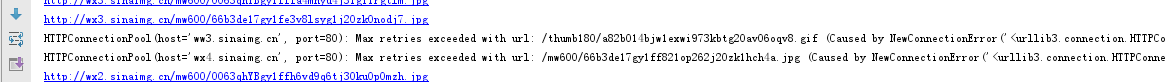

except Exception,e:

print str(e)

pass

if __name__ == '__main__':
    main()
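
One thing to watch in the script above: each image is saved under a name made of one random letter plus the current Unix time in seconds, so with 50 threads two images fetched in the same second can get the same name and overwrite each other. Below is a minimal sketch of one possible alternative, which derives the name from the image URL instead. It is written for Python 3, and `filename_from_url` and its `default_ext` parameter are hypothetical names that are not part of the original post.

```python
import hashlib
import os
from urllib.parse import urlparse


def filename_from_url(url, default_ext=".jpg"):
    """Return a file name derived from the image URL (hypothetical helper)."""
    # Keep the extension found in the URL path, if any (for example ".gif").
    ext = os.path.splitext(urlparse(url).path)[1] or default_ext
    # Hash the full URL so that different URLs give different names
    # and the same URL always maps to the same name.
    digest = hashlib.sha1(url.encode("utf-8")).hexdigest()
    return digest + ext
```

To use something like this, `DownloadJpg` would need to open `"img1/" + filename_from_url(url)` rather than appending `.gif` to a caller-supplied name. A side effect of this choice is that downloading the same URL twice rewrites the same file instead of creating a duplicate.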