Introducing Greenplum 5.1.0

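Pivotal Greenplum is a database product built on an MPP (massively parallel processing) architecture, designed for the large-scale analytical workloads of next-generation data warehouses. By automatically partitioning data and executing queries in parallel across nodes, Greenplum lets a cluster of hundreds of nodes be operated as simply and reliably as a traditional single-node database, while delivering performance gains of tens or even hundreds of times. Beyond standard SQL, Greenplum also supports analytical tools such as MapReduce, text indexing, and stored procedures.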

Greenplum 5.1.0 can be downloaded from https://network.pivotal.io/products. The documentation is at https://gpdb.docs.pivotal.io/510/main/index.html, the project home page is http://greenplum.org/, and the source code is on GitHub at https://github.com/greenplum-db/gpdb.

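New features

Improved GPORCA optimization of short-running queries

In Greenplum 5.1.0, GPORCA skips fetching and generating statistics for columns that do not need to be estimated, which reduces optimization time and noticeably speeds up short-running queries. In earlier releases, GPORCA fetched a column's other statistics even when only its width was needed.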

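Improved GPORCA optimizer performance

Greenplum 5.1.0 adds the following GPORCA performance enhancements:

1. When generating a plan for a query that joins a large number of tables, GPORCA now caps the number of join combinations it evaluates. This change has little effect on query performance but greatly reduces optimization time. In earlier releases, GPORCA evaluated every possible join combination to find the best plan, so generating the plan took longer.

2. For queries that contain a correlated subquery which itself contains a window function, GPORCA generates a more efficient join-based plan.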
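To give a rough sense of why exhaustively evaluating join combinations becomes expensive, the following sketch counts how many join orders exist for n tables. It is only a back-of-the-envelope illustration using standard combinatorics; it is not GPORCA's search algorithm, and the function names are made up for this example.

```python
from math import factorial


def left_deep_join_orders(n: int) -> int:
    """Count left-deep join orders for n tables: n! permutations."""
    if n < 1:
        raise ValueError("n must be at least 1")
    return factorial(n)


def bushy_join_trees(n: int) -> int:
    """Count ordered binary (bushy) join trees over n tables.

    This equals n! * Catalan(n - 1), which simplifies to (2n - 2)! / (n - 1)!.
    """
    if n < 1:
        raise ValueError("n must be at least 1")
    return factorial(2 * n - 2) // factorial(n - 1)
```

Calling `left_deep_join_orders(10)` already gives 3628800, and the bushy count grows faster still, which is why an optimizer that limits the combinations it considers can save substantial planning time on queries with many tables.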

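GPORCA support for indexes on leaf partitions

In Greenplum 5.1.0, if a leaf partition of a partitioned table has an index, GPORCA takes that index into account when generating the plan for that leaf partition. Earlier releases did not use indexes on leaf partitions.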

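COPY between tables and external programs

Greenplum 5.1.0 supports the COPY TO/FROM PROGRAM feature from PostgreSQL 9.3. Users can specify an external command, run in parallel on each segment, that consumes the output of COPY or supplies its input.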
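As a minimal sketch of what such statements look like, the helpers below build COPY ... TO PROGRAM and COPY ... FROM PROGRAM statements using the PostgreSQL 9.3 syntax. The helper names, the table name `sales`, and the shell commands are hypothetical, and any Greenplum-specific clauses (for example, how per-segment execution is requested) are deliberately left out; consult the Greenplum COPY reference for those.

```python
def quote_ident(name: str) -> str:
    """Quote a SQL identifier by wrapping it in double quotes."""
    return '"' + name.replace('"', '""') + '"'


def quote_literal(value: str) -> str:
    """Quote a SQL string literal by doubling single quotes.

    Backslashes are rejected because their handling depends on the
    server's string-escaping settings (an assumption kept simple here).
    """
    if "\\" in value:
        raise ValueError("backslashes are not supported in this sketch")
    return "'" + value.replace("'", "''") + "'"


def copy_to_program(table: str, command: str) -> str:
    """Build a statement that pipes the table's rows into a shell command."""
    return f"COPY {quote_ident(table)} TO PROGRAM {quote_literal(command)}"


def copy_from_program(table: str, command: str) -> str:
    """Build a statement that loads the table from a shell command's output."""
    return f"COPY {quote_ident(table)} FROM PROGRAM {quote_literal(command)}"
```

For example, `copy_to_program("sales", "gzip > /tmp/sales.csv.gz")` yields a statement that compresses the exported rows on the server side, and `copy_from_program("sales", "gunzip -c /tmp/sales.csv.gz")` yields the matching load statement.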

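SHA-256 data validation in gptransfer

In Greenplum 5.1.0, gptransfer validates transferred data using SHA-256 checksums. When FIPS mode is enabled on the operating system, MD5 is considered an insecure algorithm, so gptransfer uses the stronger SHA-256 algorithm instead.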
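The idea of checksum-based validation can be sketched with Python's standard hashlib module. This is only an illustration of comparing SHA-256 digests of source and destination data; it is not how gptransfer is implemented, and the function names are hypothetical.

```python
import hashlib


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of data as a 64-character hex string."""
    return hashlib.sha256(data).hexdigest()


def transfer_is_intact(source: bytes, destination: bytes) -> bool:
    """Treat a transfer as valid when both sides hash to the same digest."""
    return sha256_hex(source) == sha256_hex(destination)
```

A single changed byte on the destination side produces a different digest, so `transfer_is_intact` returns False for it.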

Improved gprecoverseg performance

In Greenplum 5.1.0, gprecoverseg is significantly faster when the segment being recovered contains a large number of files.

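New external data framework: PXF

Greenplum 5.1.0 introduces PXF (Pivotal Extension Framework), a new external data framework. It is deployed on every physical host that runs segments and provides access to HDFS and Hive. PXF exposes an abstract interface to external data, which makes it straightforward to support a variety of data sources.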

Experimental features

In addition to the officially supported features, Greenplum 5.1.0 includes the following experimental features:

Recursive CTE

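A CTE (Common Table Expression) defines a temporary result set that can be referenced multiple times within the same query, which can greatly simplify SQL statements. In Greenplum 5.1.0, CTE definitions support the RECURSIVE keyword, which allows a CTE to refer to itself recursively within its own definition.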
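To show what a recursive CTE looks like, here is a small self-contained sketch that walks a reporting hierarchy. It runs against SQLite through Python's standard sqlite3 module purely as a stand-in so that the example is runnable anywhere; it does not connect to Greenplum, and the `employee` table, its rows, and the `org_chart` function are invented for illustration.

```python
import sqlite3

# The CTE "reports" refers to itself in the second SELECT, which is what
# the RECURSIVE keyword permits.
QUERY = """
WITH RECURSIVE reports(id, name, depth) AS (
    SELECT id, name, 0 FROM employee WHERE manager_id IS NULL
    UNION ALL
    SELECT e.id, e.name, r.depth + 1
    FROM employee AS e
    JOIN reports AS r ON e.manager_id = r.id
)
SELECT name, depth FROM reports ORDER BY depth, name
"""


def org_chart():
    """Return (name, depth) rows for a tiny hypothetical hierarchy."""
    conn = sqlite3.connect(":memory:")
    try:
        conn.execute(
            "CREATE TABLE employee ("
            "id INTEGER PRIMARY KEY, name TEXT, manager_id INTEGER)"
        )
        conn.executemany(
            "INSERT INTO employee VALUES (?, ?, ?)",
            [(1, "Ada", None), (2, "Ben", 1), (3, "Cy", 1), (4, "Dee", 2)],
        )
        return conn.execute(QUERY).fetchall()
    finally:
        conn.close()
```

The first SELECT seeds the result with the root of the hierarchy, and the second SELECT repeatedly joins the rows found so far back to the table until no new rows appear.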

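Resource management based on resource groups

Resource groups are Greenplum's next-generation resource management framework. They can be used to manage the number of concurrent queries and to limit the CPU and memory each query may use. Greenplum 5.1.0 still uses the older resource management mechanism by default; to try resource groups, set gp_resource_manager to "group".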

pgAdmin 4 support

Greenplum 5.1.0 is compatible with pgAdmin 4. Users can use pgAdmin 4 to query and browse Greenplum tables (including AO tables) and to view DDL information.

Greenplum 5.1.0 extension components

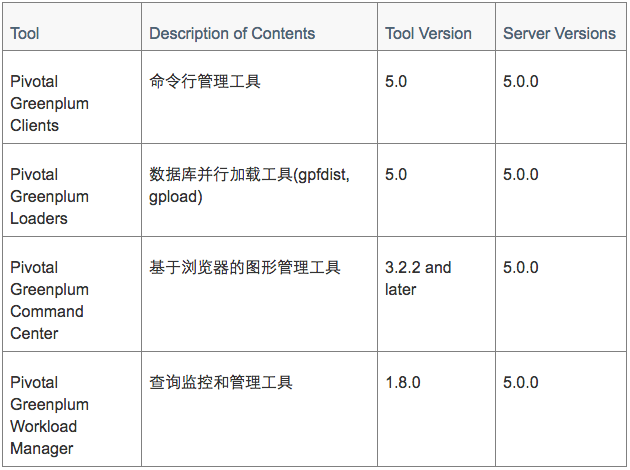

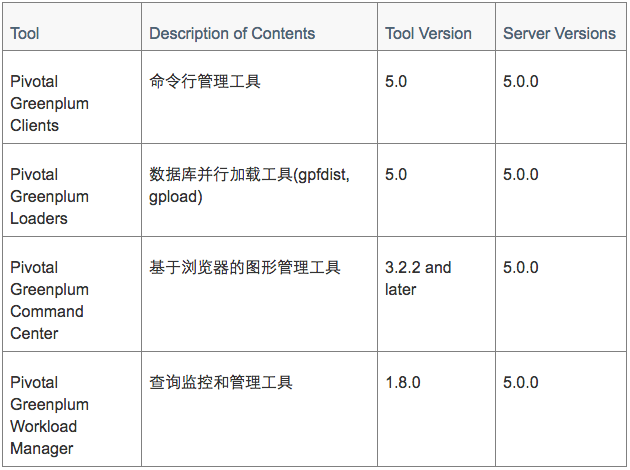

Client tools

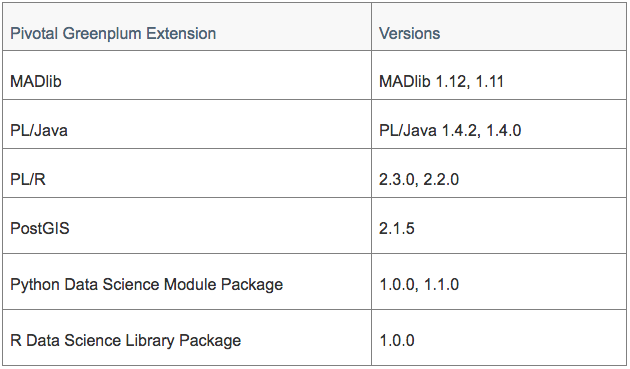

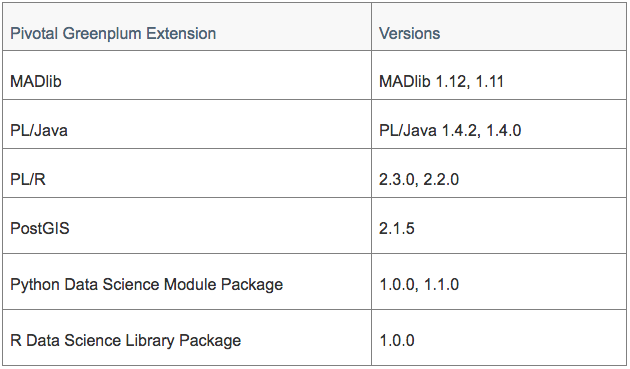

Extension modules

Other extensions

· PXF Extension Framework

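Greenplum 5.1.0 introduces PXF (Pivotal Extension Framework), a new external data framework. It is deployed on every physical host that runs segments and provides access to HDFS and Hive. PXF exposes an abstract interface to external data, which makes it straightforward to support a variety of data sources.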

· Greenplum-Spark Connector

Supports high-speed parallel data transfer between Greenplum and Spark.

· Pivotal GPText

Pivotal Greenplum Database 5.1.0 supports GPText version 2.1.3 and later. GPText is Greenplum's text search engine and provides full-text search and text analytics.

Platforms supported by Greenplum 5.1.0

The Greenplum server supports the following platforms:

· Red Hat Enterprise Linux 64-bit 7.x

· Red Hat Enterprise Linux 64-bit 6.x

· SuSE Linux Enterprise Server 64-bit 11 SP4

· CentOS 64-bit 7.x

· CentOS 64-bit 6.x

Greenplum's Java components require one of the following Java versions:

· 8.xxx

· 7.xxx

Greenplum requires the following packages at run time:

· OpenSSL 1.0.2l (with FIPS 2.0.16)

· cURL 7.54

· OpenLDAP 2.4.44

· Python 2.7.12

The client tools support the following platforms:

· Red Hat Enterprise Linux 64-bit 7.x

· Red Hat Enterprise Linux 64-bit 6.x

· SuSE Linux Enterprise Server 64-bit 11 SP4

· CentOS 64-bit 7.x

· CentOS 64-bit 6.x

· Windows

· AIX
