20,844

社区成员

发帖

发帖 与我相关

与我相关 我的任务

我的任务 分享

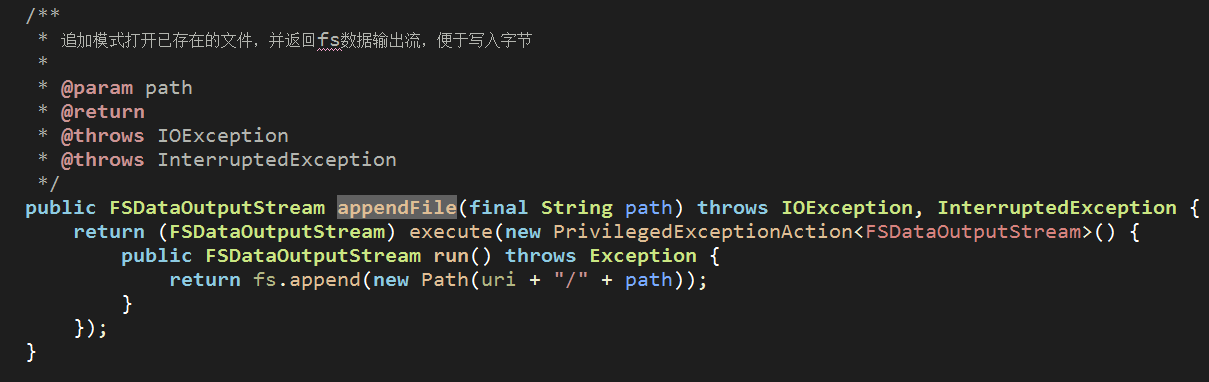

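import java.io.ByteArrayInputStream;
import java.io.IOException;
import java.io.InputStream;
import java.io.OutputStream;
import java.net.URI;
import java.nio.charset.StandardCharsets;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.FileSystem;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.IOUtils;

/**
 * Writes data to HDFS in append mode, creating the file if it does not exist yet.
 *
 * @param str  file content
 * @param hdfs HDFS file path
 * @throws ClassNotFoundException
 * @throws IOException
 */
public static void WriteFile(String str, String hdfs) throws ClassNotFoundException, IOException {
    InputStream in = new ByteArrayInputStream(str.getBytes(StandardCharsets.UTF_8));
    Configuration conf = new Configuration();
    // Only relevant on older Hadoop releases where append is not enabled by default.
    conf.setBoolean("dfs.support.append", true);
    // Do not add a replacement DataNode to the write pipeline when one fails;
    // appends on very small clusters can fail without this.
    conf.set("dfs.client.block.write.replace-datanode-on-failure.policy", "NEVER");
    conf.set("dfs.client.block.write.replace-datanode-on-failure.enable", "true");
    try {
        FileSystem fs = FileSystem.get(URI.create(hdfs), conf);
        Path path = new Path(hdfs);
        // Append only when the file already exists. Falling back to create() after
        // any append failure would overwrite the existing file.
        OutputStream fo = fs.exists(path) ? fs.append(path) : fs.create(path);
        // close=true: copyBytes closes both streams, so no explicit close() calls are needed.
        IOUtils.copyBytes(in, fo, 4096, true);
        System.out.println("write file success...");
    } catch (Exception e) {
        e.printStackTrace();
    }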
}

<!-- hdfs-site.xml -->
<configuration>

    <property>
        <name>dfs.replication</name>
        <value>1</value>
    </property>
    <property>
        <name>dfs.namenode.name.dir</name>
        <value>file:/home/hadoop/tmp/dfs/name</value>
    </property>
    <property>
        <name>dfs.datanode.data.dir</name>
        <value>file:/home/hadoop/tmp/dfs/data</value>
    </property>

</configuration>

<!-- core-site.xml -->
<configuration>

    <property>
        <name>hadoop.tmp.dir</name>
        <value>file:/home/hadoop/tmp</value>
        <description>A base for other temporary directories.</description>
    </property>
    <property>
        <name>fs.defaultFS</name>
        <value>hdfs://node3:9000</value>
    </property>

</configuration>
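
One thing to watch with the WriteFile method above: it writes the string exactly as passed in, so successive appends only land on separate lines if each string already ends with a newline. A minimal sketch of a helper for that follows. The class LineRecords and its method names are hypothetical and not part of the original post; it uses only the Java standard library.

```java
import java.nio.charset.StandardCharsets;

/** Hypothetical helper (not from the original post) for preparing line-oriented records. */
final class LineRecords {
    private LineRecords() {}

    /** Returns the record unchanged if it already ends with '\n', otherwise appends one. */
    static String withLineTerminator(String record) {
        return record.endsWith("\n") ? record : record + "\n";
    }

    /** Encodes the newline-terminated record as UTF-8, the encoding WriteFile also uses. */
    static byte[] toUtf8Line(String record) {
        return withLineTerminator(record).getBytes(StandardCharsets.UTF_8);
    }
}
```

A call could then look like WriteFile(LineRecords.withLineTerminator("first record"), "hdfs://node3:9000/tmp/demo.txt"), where the host and port come from fs.defaultFS above and the path /tmp/demo.txt is only an illustration.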