CNNNetwork InferenceEngine::Core::ReadNetwork(
    const std::string & modelPath,
    const std::string & binPath = {}
) const

import torch
import torchvision

# Export a pretrained ResNet18 to ONNX, tracing it with a dummy 1x3x224x224 input
model = torchvision.models.resnet18(pretrained=True).eval()
dummy_input = torch.randn((1, 3, 224, 224))
torch.onnx.export(model, dummy_input, "resnet18.onnx")

ExecutableNetwork InferenceEngine::Core::LoadNetwork(
    const CNNNetwork & network,
    const std::string & deviceName,
    const std::map< std::string, std::string > & config = {}
)
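Because `binPath` is optional in `ReadNetwork`, the same call can take either a single ONNX file or an IR `.xml`/`.bin` pair. The sketch below shows one way to pick the arguments from the file extension. The helper name `read_network_args` is hypothetical (it is not part of OpenVINO), and it assumes the IR weights file sits next to the `.xml` with the same base name.

```python
from pathlib import Path


def read_network_args(model_path):
    """Build keyword arguments for IECore.read_network from a model path.

    Hypothetical helper: assumes an IR model's .bin file shares the
    .xml file's directory and base name.
    """
    path = Path(model_path)
    suffix = path.suffix.lower()
    if suffix == ".onnx":
        # ONNX is a single file, so no separate weights argument is needed
        return {"model": str(path)}
    if suffix == ".xml":
        # IR splits topology (.xml) and weights (.bin) into two files
        return {"model": str(path), "weights": str(path.with_suffix(".bin"))}
    raise ValueError("unsupported model format: {}".format(path.suffix))
```

With OpenVINO installed, this could be used as `net = IECore().read_network(**read_network_args("resnet18.onnx"))`.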

from __future__ import print_function
import cv2
import numpy as np
import time
import logging as log
from openvino.inference_engine import IECore

with open('imagenet_classes.txt') as f:
    labels = [line.strip() for line in f.readlines()]


def image_classification(use_onnx=False):
    model_xml = "resnet18.xml"
    model_bin = "resnet18.bin"
    onnx_model = "resnet18.onnx"

    # Plugin initialization for specified device and load extensions library if specified
    log.info("Creating Inference Engine")
    ie = IECore()

    # Read the network, either directly from ONNX or from IR
    inf_start = time.time()
    if use_onnx:
        # Load the ONNX model directly
        log.info("Loading network file:\n\t{}".format(onnx_model))
        net = ie.read_network(model=onnx_model)
    else:
        # Load the IR format (.xml topology plus .bin weights)
        log.info("Loading network files:\n\t{}\n\t{}".format(model_xml, model_bin))
        net = ie.read_network(model=model_xml, weights=model_bin)

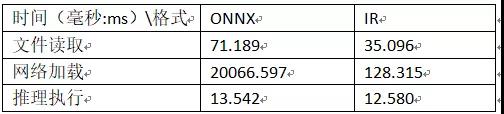

    load_time = time.time() - inf_start
    print("read network time(ms) : %.3f" % (load_time * 1000))

    log.info("Preparing input blobs")
    input_blob = next(iter(net.input_info))
    out_blob = next(iter(net.outputs))

    # Read and pre-process input images
    n, c, h, w = net.input_info[input_blob].input_data.shape
    src = cv2.imread("D:/images/messi.jpg")
    # image = cv2.dnn.blobFromImage(src, 0.00375, (w, h), (123.675, 116.28, 103.53), True)
    image = cv2.resize(src, (w, h))
    # cv2.imread returns BGR, while the mean/std below are in RGB order
    image = cv2.cvtColor(image, cv2.COLOR_BGR2RGB)
    image = np.float32(image) / 255.0
    image -= np.array([0.485, 0.456, 0.406], dtype=np.float32)
    image /= np.array([0.229, 0.224, 0.225], dtype=np.float32)
    image = image.transpose((2, 0, 1))  # HWC -> CHW

    # Loading model to the plugin
    log.info("Loading model to the plugin")
    start_load = time.time()
    exec_net = ie.load_network(network=net, device_name="CPU")
    end_load = time.time() - start_load
    print("load time(ms) : %.3f" % (end_load * 1000))

    # Start sync inference
    log.info("Starting inference in synchronous mode")
    inf_start1 = time.time()
    res = exec_net.infer(inputs={input_blob: [image]})
    inf_end1 = time.time() - inf_start1
    print("infer onnx as network time(ms) : %.3f" % (inf_end1 * 1000))

    # Processing output blob
    log.info("Processing output blob")
    res = res[out_blob]
    label_index = np.argmax(res, 1)
    label_txt = labels[label_index[0]]
    inf_end = time.time()
    det_time = inf_end - inf_start1

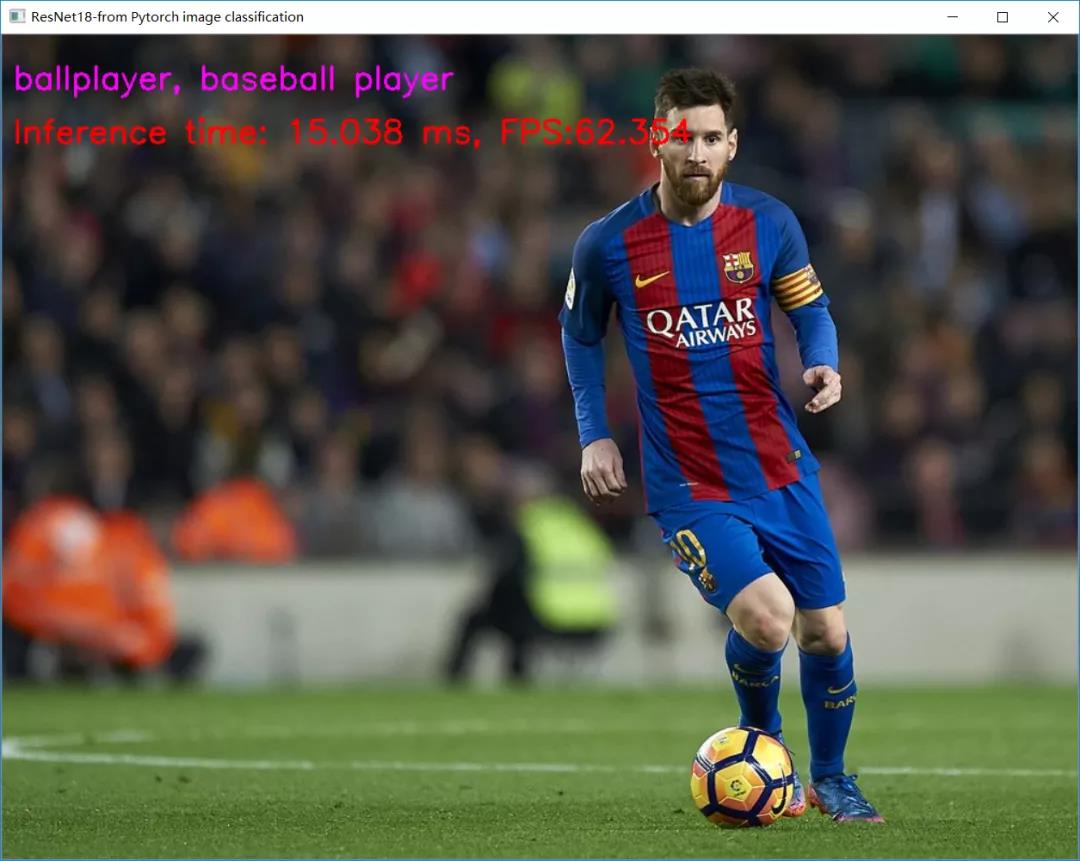

    inf_time_message = "Inference time: {:.3f} ms, FPS:{:.3f}".format(det_time * 1000, 1000 / (det_time * 1000 + 1))
    cv2.putText(src, label_txt, (10, 50), cv2.FONT_HERSHEY_SIMPLEX, 1.0, (255, 0, 255), 2, 8)
    cv2.putText(src, inf_time_message, (10, 100), cv2.FONT_HERSHEY_SIMPLEX, 1.0, (0, 0, 255), 2, 8)
    cv2.imshow("ResNet18-from Pytorch image classification", src)
    cv2.waitKey(0)
    cv2.destroyAllWindows()


if __name__ == '__main__':
    image_classification(True)
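
The pre- and post-processing steps in the script can be pulled out into plain NumPy functions, which makes them checkable without OpenVINO or a test image. The following is a minimal sketch, not the author's code: the function names `preprocess` and `top_k` are hypothetical, the input is assumed to be already resized to the network's input size, and the BGR to RGB swap assumes the image came from `cv2.imread` while the torchvision weights expect RGB. The softmax in `top_k` is also an addition; the script above only takes the arg-max of the raw scores.

```python
import numpy as np

# ImageNet mean and standard deviation from the script above, in RGB order
MEAN = np.array([0.485, 0.456, 0.406], dtype=np.float32)
STD = np.array([0.229, 0.224, 0.225], dtype=np.float32)


def preprocess(bgr_image):
    """Turn an already-resized HxWx3 uint8 BGR image into a CHW float32 array."""
    rgb = bgr_image[:, :, ::-1]                  # assumed BGR input -> RGB
    scaled = rgb.astype(np.float32) / 255.0      # scale to [0, 1]
    normalized = (scaled - MEAN) / STD           # per-channel normalization
    return np.ascontiguousarray(normalized.transpose(2, 0, 1))  # HWC -> CHW


def top_k(scores, labels, k=5):
    """Return the k (label, probability) pairs with the highest softmax probability."""
    scores = np.asarray(scores, dtype=np.float32).ravel()
    exp = np.exp(scores - scores.max())          # subtract max for numerical stability
    probs = exp / exp.sum()
    order = np.argsort(probs)[::-1][:k]          # indices sorted by descending probability
    return [(labels[i], float(probs[i])) for i in order]
```

In the script, `preprocess(cv2.resize(src, (w, h)))` would stand in for the normalization lines, and `top_k(res[out_blob], labels)` would give the five most likely classes instead of a single label.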