cudaEvent_t start, stop; // event objects
cudaEventCreate(&start); // create the start event
cudaEventCreate(&stop); // create the stop event
cudaEventRecord(start, stream); // record the start event
myKernel<<<dimg, dimb, size_smem, stream>>>(parameter list); // launch the kernel
cudaEventRecord(stop, stream); // record the stop event
cudaEventSynchronize(stop); // block until all device work preceding the stop event has completed
float elapsedTime;
cudaEventElapsedTime(&elapsedTime, start, stop); // elapsed time between the two events, in ms
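The event sequence above can be wrapped in a small helper that also checks return codes and destroys the events afterwards. The following is only a sketch under stated assumptions: `dummyKernel` and `timeDummyKernel` are hypothetical names that do not appear in the original snippet, and the kernel body is a placeholder standing in for `myKernel`.

```cuda
#include <cstdio>
#include <cuda_runtime.h>

// Hypothetical placeholder kernel; stands in for myKernel in the snippet above.
__global__ void dummyKernel(int *data)
{
    data[threadIdx.x] += 1;
}

// Hypothetical helper: times `launches` back-to-back launches of dummyKernel
// on `stream` and stores the total elapsed time (in ms) in *ms.
// Returns the first CUDA error encountered, or cudaSuccess.
cudaError_t timeDummyKernel(int *dev_data, int threads, int launches,
                            cudaStream_t stream, float *ms)
{
    cudaEvent_t start = nullptr, stop = nullptr;
    cudaError_t err = cudaEventCreate(&start);
    if (err == cudaSuccess) err = cudaEventCreate(&stop);
    if (err == cudaSuccess) err = cudaEventRecord(start, stream);
    if (err == cudaSuccess) {
        for (int i = 0; i < launches; i++)
            dummyKernel<<<1, threads, 0, stream>>>(dev_data);
        err = cudaGetLastError(); // reports launch errors such as a bad configuration
    }
    if (err == cudaSuccess) err = cudaEventRecord(stop, stream);
    if (err == cudaSuccess) err = cudaEventSynchronize(stop);
    if (err == cudaSuccess) err = cudaEventElapsedTime(ms, start, stop);

    // Release the events whether or not the measurement succeeded.
    if (start) cudaEventDestroy(start);
    if (stop) cudaEventDestroy(stop);
    return err;
}
```

A call might look like `timeDummyKernel(dev_data, 32, 1000, 0, &ms)`, where `dev_data` is a device buffer holding at least 32 ints. Dividing `ms` by the launch count gives a rough per-launch figure.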

#include "cuda_runtime.h"
#include "device_launch_parameters.h"
#include <stdio.h>

cudaError_t addWithCuda(int *c, const int *a, const int *b, size_t size);

// One element per block: launched as <<<size, 1>>>.
__global__ void addKernel_blk(int *c, const int *a, const int *b)
{
    int i = blockIdx.x;
    c[i] = a[i] + b[i];
}

// One element per thread within a single block: launched as <<<1, size>>>.
__global__ void addKernel_thd(int *c, const int *a, const int *b)
{
    int i = threadIdx.x;
    c[i] = a[i] + b[i];
}

int main()

{

const int arraySize = 1024;

int a[arraySize] = {0};

int b[arraySize] = {0};

for(int i = 0;i<arraySize;i++)

{

a[i] = i;

b[i] = arraySize-i;

}

int c[arraySize] = {0};

// Add vectors in parallel.

cudaError_t cudaStatus;

int num = 0;

cudaDeviceProp prop;

cudaStatus = cudaGetDeviceCount(&num);

for(int i = 0;i<num;i++)

{

cudaGetDeviceProperties(&prop,i);

}

cudaStatus = addWithCuda(c, a, b, arraySize);

if (cudaStatus != cudaSuccess)

{

fprintf(stderr, "addWithCuda failed!");

return 1;

}

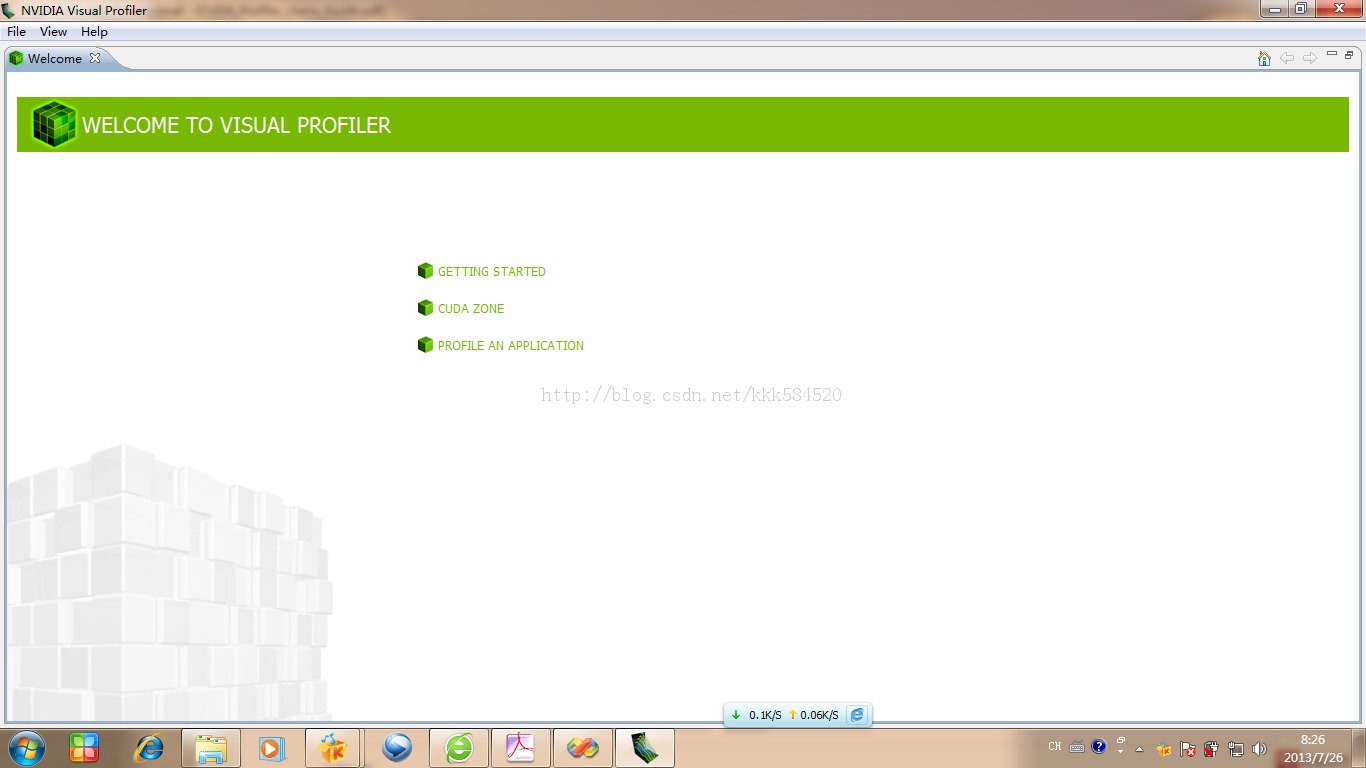

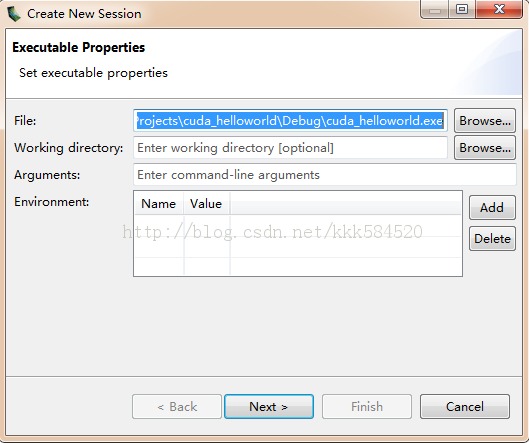

// cudaThreadExit must be called before exiting in order for profiling and

// tracing tools such as Nsight and Visual Profiler to show complete traces.

cudaStatus = cudaThreadExit();

if (cudaStatus != cudaSuccess)

{

fprintf(stderr, "cudaThreadExit failed!");

return 1;

}

for(int i = 0;i<arraySize;i++)

{

if(c[i] != (a[i]+b[i]))

{

printf("Error in %d\n",i);

}

}

return 0;

}

// Helper function for using CUDA to add vectors in parallel.

cudaError_t addWithCuda(int *c, const int *a, const int *b, size_t size)

{

int *dev_a = 0;

int *dev_b = 0;

int *dev_c = 0;

cudaError_t cudaStatus;

// Choose which GPU to run on, change this on a multi-GPU system.

cudaStatus = cudaSetDevice(0);

if (cudaStatus != cudaSuccess)

{

fprintf(stderr, "cudaSetDevice failed! Do you have a CUDA-capable GPU installed?");

goto Error;

}

// Allocate GPU buffers for three vectors (two input, one output) .

cudaStatus = cudaMalloc((void**)&dev_c, size * sizeof(int));

if (cudaStatus != cudaSuccess)

{

fprintf(stderr, "cudaMalloc failed!");

goto Error;

}

cudaStatus = cudaMalloc((void**)&dev_a, size * sizeof(int));

if (cudaStatus != cudaSuccess)

{

fprintf(stderr, "cudaMalloc failed!");

goto Error;

}

cudaStatus = cudaMalloc((void**)&dev_b, size * sizeof(int));

if (cudaStatus != cudaSuccess)

{

fprintf(stderr, "cudaMalloc failed!");

goto Error;

}

// Copy input vectors from host memory to GPU buffers.

cudaStatus = cudaMemcpy(dev_a, a, size * sizeof(int), cudaMemcpyHostToDevice);

if (cudaStatus != cudaSuccess)

{

fprintf(stderr, "cudaMemcpy failed!");

goto Error;

}

cudaStatus = cudaMemcpy(dev_b, b, size * sizeof(int), cudaMemcpyHostToDevice);

if (cudaStatus != cudaSuccess)

{

fprintf(stderr, "cudaMemcpy failed!");

goto Error;

}

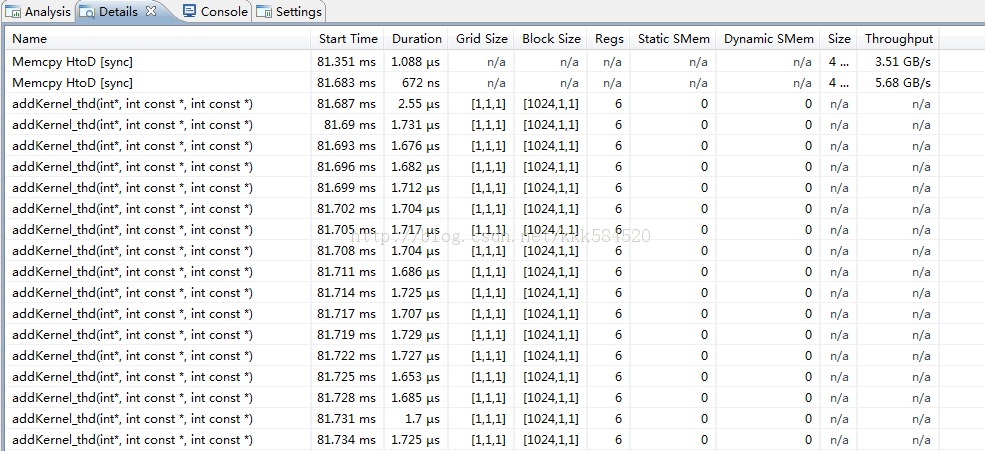

cudaEvent_t start,stop;

cudaEventCreate(&start);

cudaEventCreate(&stop);

cudaEventRecord(start,0);

for(int i = 0;i<1000;i++)

{

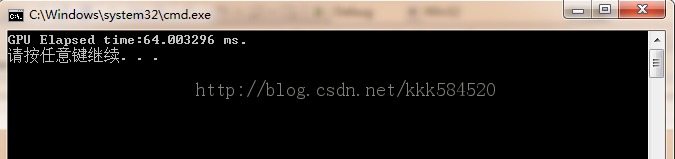

// addKernel_blk<<<size,1>>>(dev_c, dev_a, dev_b);

addKernel_thd<<<1,size>>>(dev_c, dev_a, dev_b);

}

cudaEventRecord(stop,0);

cudaEventSynchronize(stop);

float tm;

cudaEventElapsedTime(&tm,start,stop);

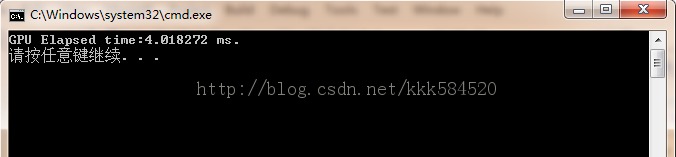

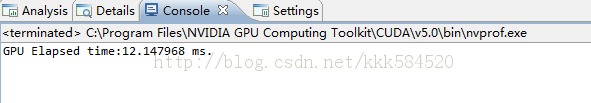

printf("GPU Elapsed time:%.6f ms.\n",tm);

// cudaThreadSynchronize waits for the kernel to finish, and returns

// any errors encountered during the launch.

cudaStatus = cudaThreadSynchronize();

if (cudaStatus != cudaSuccess)

{

fprintf(stderr, "cudaThreadSynchronize returned error code %d after launching addKernel!\n", cudaStatus);

goto Error;

}

// Copy output vector from GPU buffer to host memory.

cudaStatus = cudaMemcpy(c, dev_c, size * sizeof(int), cudaMemcpyDeviceToHost);

if (cudaStatus != cudaSuccess)

{

fprintf(stderr, "cudaMemcpy failed!");

goto Error;

}

Error:

cudaFree(dev_c);

cudaFree(dev_a);

cudaFree(dev_b);

return cudaStatus;

}
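The two kernels in the program index by `blockIdx.x` alone or by `threadIdx.x` alone, so the single-block variant only works while the array fits in one block (1024 threads on current devices, which is exactly the `arraySize` used here). A common way to lift that limit is to combine both indices. The sketch below is an illustration rather than part of the original post: `addKernel_grid`, `launchAddGrid`, and the block size of 256 are all assumptions.

```cuda
#include <cuda_runtime.h>

// Hypothetical kernel (not in the original post): each thread derives a
// global index from its block and thread coordinates.
__global__ void addKernel_grid(int *c, const int *a, const int *b, int n)
{
    int i = blockIdx.x * blockDim.x + threadIdx.x;
    if (i < n) // threads in the last block may fall past the end of the array
        c[i] = a[i] + b[i];
}

// Hypothetical launcher: rounds the block count up so that all n elements
// are covered, then reports any launch error.
cudaError_t launchAddGrid(int *dev_c, const int *dev_a, const int *dev_b, int n)
{
    const int threadsPerBlock = 256; // assumed value; tune for the target device
    const int blocks = (n + threadsPerBlock - 1) / threadsPerBlock;
    addKernel_grid<<<blocks, threadsPerBlock>>>(dev_c, dev_a, dev_b, n);
    return cudaGetLastError();
}
```

In `addWithCuda`, the launch inside the timing loop could be swapped for `launchAddGrid(dev_c, dev_a, dev_b, (int)size)` to compare this layout against the other two.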