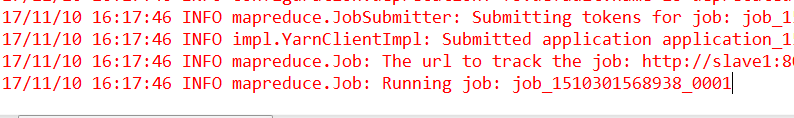

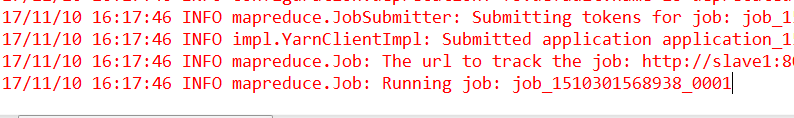

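The table is small, only a little over 20 MB, but the job hangs and never makes progress. On Hadoop's 8088 page (the YARN ResourceManager web UI), the job is also stuck and never runs.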

Here is the source code:

    // Imports assume the Sqoop 1.4.x and Hadoop 2.x package layout.
    import org.apache.hadoop.conf.Configuration;
    import org.apache.sqoop.Sqoop;
    import org.apache.sqoop.tool.SqoopTool;
    import org.apache.sqoop.util.OptionsFileUtil;

    private static int importDataFromMysql() throws Exception {
        // Arguments for the Sqoop "import" tool, in the documented "--" long-option form.
        String[] args = new String[] {
            "--connect", "jdbc:mysql://10.0.37.43:3306/rocket",
            "--driver", "com.mysql.jdbc.Driver",
            "--username", "root",
            "--password", "root",
            "--table", "properties1",
            "-m", "1", // run a single map task
            "--target-dir", "/sqoop/properties3"};
        String[] expandArguments = OptionsFileUtil.expandArguments(args);
        SqoopTool tool = SqoopTool.getTool("import");

        Configuration conf = new Configuration();
        // Set the HDFS service address ("fs.default.name" is the deprecated alias of "fs.defaultFS").
        conf.set("fs.default.name", "hdfs://192.168.88.129:9000");
        conf.set("hadoop.job.user", "root");
        // Submit the job to YARN and point the client at the ResourceManager.
        conf.set("mapreduce.framework.name", "yarn");
        conf.set("yarn.resourcemanager.hostname", "192.168.88.129");
        conf.set("yarn.resourcemanager.address", "192.168.88.129:8032");
        conf.set("yarn.resourcemanager.resource-tracker.address", "192.168.88.129:8031");
        conf.set("yarn.resourcemanager.scheduler.address", "192.168.88.129:8030");
        conf.set("mapreduce.app-submission.cross-platform", "true");

        Configuration loadPlugins = SqoopTool.loadPlugins(conf);
        Sqoop sqoop = new Sqoop((com.cloudera.sqoop.tool.SqoopTool) tool, loadPlugins);
        return Sqoop.runSqoop(sqoop, expandArguments);
    }
